Chapter 25: Advanced Shell Scripting: awk for Text Processing

Chapter Objectives

By the end of this chapter, you will be able to:

- Understand the fundamental architecture and operational model of the awk utility.
- Implement awk scripts that use patterns, actions, and control structures to parse complex text data.
- Configure and utilize field separators, built-in variables, and functions to manipulate and extract specific data from log files and command outputs.
- Develop complete shell scripts that integrate awk for generating formatted reports from raw system data on a Raspberry Pi 5.
- Debug common awk scripting errors related to quoting, variable scope, and pattern matching.
- Apply awk to practical embedded systems tasks, such as analyzing kernel messages, monitoring resource usage, and processing sensor data logs.

Introduction

In the world of embedded Linux, developers are constantly interacting with text-based data. System logs, kernel messages, configuration files, and the output of diagnostic tools are all streams of text that hold vital clues about a system’s behavior. While tools like grep and sed are excellent for searching and simple substitutions, they often fall short when more complex, field-based data manipulation is required. This is where awk emerges as an indispensable tool in the embedded developer’s arsenal.

awk is not merely a command; it is a powerful, data-driven programming language designed specifically for text processing. Its ability to recognize data organized into records (typically lines) and fields (typically words separated by whitespace) makes it exceptionally suited for transforming raw, unstructured log data into structured, actionable information. Imagine needing to quickly calculate the average response time from a device driver’s debug output, generate a summary of network packet types from a tcpdump log, or filter specific error codes from thousands of lines of kernel messages on your Raspberry Pi 5. awk handles these tasks with an elegance and efficiency that is difficult to achieve with standard shell scripting alone. This chapter will move beyond simple one-liners and explore awk as a full-fledged scripting language, empowering you to build sophisticated data extraction and reporting tools essential for modern embedded systems development and analysis.

Technical Background

To truly master awk, one must look beyond its command-line invocation and understand it as a complete programming environment. The name awk is derived from the surnames of its authors: Alfred Aho, Peter Weinberger, and Brian Kernighan. Developed at Bell Labs in the 1970s, its design philosophy was to create a tool that could handle text-processing tasks that were too complex for sed but for which writing a full C program felt like overkill. The result was a Turing-complete language that seamlessly integrates with the Unix/Linux shell, operating on a simple yet powerful paradigm: pattern-action pairs.

The awk Operational Model: A Data-Driven Engine

At its core, awk reads its input one record at a time. By default, a record is a single line of text, terminated by a newline character. For each record it reads, awk scans through a series of pattern { action } rules that you provide in your script. If the current record matches the pattern, awk executes the corresponding action. If no pattern is provided, the action is performed for every record. Conversely, if no action is provided, the default action is to print the entire record (print $0), but only if it matches the pattern. This simple loop—read a record, test patterns, execute actions—is the engine that drives all awk programs.
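The three rule shapes described above can be tried on a few made-up log lines. This is a minimal sketch: the sample text and the shell variable names (input, result) are assumptions chosen for illustration, not part of any real log format.

```shell
# Hypothetical sample input: three made-up log lines.
input='INFO boot complete
ERROR sensor timeout
INFO heartbeat'

# Rule 1: pattern with no action  -> matching records are printed as-is.
# Rule 2: pattern with an action  -> the action runs only for matching records.
# Rule 3: action with no pattern  -> the action runs for every record.
result=$(printf '%s\n' "$input" | awk '
/ERROR/
/INFO/  { info++ }
        { total++ }
END     { print "info=" info, "total=" total }
')

printf '%s\n' "$result"
```

Every record is tested against every rule, in order, so a single line may trigger several actions.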

%%{ init: { 'theme': 'base', 'themeVariables': { 'fontFamily': 'Open Sans' } } }%%

graph TD

subgraph AWK Processing Engine

direction TB

A[Input File<br><i>e.g., /var/log/syslog</i>] --> B{"Read Record<br><i>(Line by Line)</i>"};

B --> C{Test All<br>Pattern-Action Rules};

C -->|Pattern Matches?| D["Execute Action<br><i>e.g., { print $3, $1 }</i>"];

C -->|No Match| B;

D --> B;

B -->|End of File| E[Formatted Output];

end

%% Styling

style A fill:#1e3a8a,stroke:#1e3a8a,stroke-width:2px,color:#ffffff

style B fill:#0d9488,stroke:#0d9488,stroke-width:1px,color:#ffffff

style C fill:#f59e0b,stroke:#f59e0b,stroke-width:1px,color:#ffffff

style D fill:#8b5cf6,stroke:#8b5cf6,stroke-width:1px,color:#ffffff

style E fill:#10b981,stroke:#10b981,stroke-width:2px,color:#ffffff

Furthermore, awk automatically parses each record into fields. By default, fields are sequences of non-whitespace characters separated by one or more spaces or tabs. awk makes these fields directly accessible within your script through special variables: $1 refers to the first field, $2 to the second, and so on. The variable $0 is reserved to represent the entire, unmodified record. This automatic field splitting is awk’s most defining feature and the primary source of its power. It transforms a line of text into a structured collection of data that can be manipulated, compared, and rearranged.

For instance, consider a line from the output of the ls -l command:

-rwxr-xr-x 1 pi pi 4096 Jul 7 15:30 my_script.sh

To awk, this is not just a string of characters. It is a record that it automatically breaks down into nine fields. $1 is -rwxr-xr-x, $5 is 4096 (the file size), and $9 is my_script.sh (the filename). This allows you to write simple actions like { print $9, $5 } to instantly create a report of filenames and their sizes, without any manual parsing logic.
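To see the field splitting described above, the same ls -l line can be fed to awk directly. This is a small sketch; the variable names line and result are illustrative.

```shell
# The sample record is the ls -l line quoted in the text.
line='-rwxr-xr-x 1 pi pi 4096 Jul 7 15:30 my_script.sh'

# NF is the number of fields; $9 and $5 are the filename and the size.
result=$(printf '%s\n' "$line" | awk '{ print NF; print $9, $5 }')

printf '%s\n' "$result"
```

Note that a filename containing spaces would be split across several fields, so $9 alone is only reliable for names without whitespace.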

Special Patterns: BEGIN and END

While most awk logic operates on records from the input, there are two special patterns that provide hooks for initialization and finalization: BEGIN and END.

The action associated with the BEGIN pattern is executed before awk reads the very first record from its input. This makes it the ideal place for tasks such as initializing variables, printing report headers, or setting the field separator. For example, you might want to create a report with a title and column headings. The BEGIN block ensures this header is printed only once at the very start.

BEGIN { print "System Log Report" }

Conversely, the action associated with the END pattern is executed after the very last record has been read and processed. This is invaluable for post-processing tasks like calculating and printing totals, averages, or summary statistics that depend on the entire dataset having been seen. If you were counting the number of error messages in a log file, the END block would be where you print the final count.

END { print "Total errors found:", error_count }

A complete awk script often has a three-part structure: a BEGIN block for setup, a main body of pattern-action rules for processing each record, and an END block for summarizing the results. This structure provides a clean and powerful framework for a vast range of text-processing tasks.
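Putting the two fragments above together gives the three-part structure in miniature. The input lines here are invented for the sketch, and error_count is the same illustrative variable used in the END example.

```shell
# Hypothetical input: four made-up log lines, two of which contain "error".
result=$(printf '%s\n' 'ok start' 'error disk' 'ok run' 'error net' | awk '
BEGIN   { print "System Log Report"; error_count = 0 }   # setup, runs once
/error/ { error_count++ }                                # runs per matching record
END     { print "Total errors found:", error_count }     # summary, runs once
')

printf '%s\n' "$result"
```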

Controlling Field and Record Separation: FS and RS

While awk’s default behavior of using whitespace to separate fields and newlines to separate records is convenient, it is not always sufficient. Embedded system logs and configuration files often use other delimiters, such as commas, colons, or pipes. awk provides built-in variables to control this behavior.

The Field Separator, controlled by the FS variable, dictates how awk splits records into fields. While it can be set on the command line using the -F option (e.g., awk -F':'), it is often more readable and maintainable to set it within the BEGIN block of a script. For example, to process the /etc/passwd file, which uses colons as delimiters, you would set FS = ":".
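As a quick illustration of the two ways to set the field separator, the sketch below runs both forms on passwd-style records. The records are hypothetical sample text and are not read from the real /etc/passwd.

```shell
# Hypothetical passwd-style records (login name is field 1, shell is field 7).
records='root:x:0:0:root:/root:/bin/bash
pi:x:1000:1000:,,,:/home/pi:/bin/bash'

# Form 1: set FS inside a BEGIN block.
result=$(printf '%s\n' "$records" | awk 'BEGIN { FS = ":" } { print $1, $7 }')

# Form 2: set the separator with -F on the command line.
same=$(printf '%s\n' "$records" | awk -F: '{ print $1, $7 }')

printf '%s\n' "$result"
```

Both forms split the records identically; the BEGIN form keeps the setting inside the script file, which helps when the script is run with awk -f.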

The Record Separator, controlled by the RS variable, determines what separates one record from the next. The default is the newline character. However, in some cases, records might be separated by a blank line, a specific character, or even a multi-character string. For instance, if you have a data file where records are separated by a double newline (a blank line), you could set RS = "". This capability allows awk to process multi-line records as a single unit, a powerful feature for parsing complex data formats.
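The blank-line case can be sketched as follows. The name=value layout of the data is an assumption made up for this example; only RS = "" and FS = "\n" are the awk features being shown.

```shell
# Hypothetical multi-line records, separated by a blank line.
data='name=temp0
value=22.5

name=temp1
value=24.0'

# RS = "" makes each blank-line-separated block one record;
# FS = "\n" then makes each line of the block one field.
result=$(printf '%s\n' "$data" | awk '
BEGIN { RS = ""; FS = "\n" }
{ print NR ": " $1 " " $2 }
')

printf '%s\n' "$result"
```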

Built-in Variables: awk’s Internal State

Beyond FS and RS, awk maintains a host of other built-in variables that provide context about the processing state. Understanding these is key to writing sophisticated scripts. Some of the most important include:

- NR (Number of Records): The cumulative count of records read so far across all input files. It is 0 inside a BEGIN block, becomes 1 when the first record is read, and increments for each record after that. It is invaluable for numbering lines or performing actions only on specific record numbers.
- FNR (File Number of Records): Similar to NR, but it resets to 1 at the first record of each new input file. This is crucial when processing multiple files to detect when awk has started reading a new file (i.e., when FNR == 1).
- NF (Number of Fields): The number of fields in the current record. It is recalculated for every record. It is often used to check that a record has the expected structure before processing it (e.g., if (NF == 9)).
- FILENAME: The name of the input file currently being processed.
- OFS (Output Field Separator): The separator used between fields in the output. By default, it is a single space. When you use print $1, $2, awk inserts the value of OFS between the two fields. Setting OFS = "," in a BEGIN block causes awk to generate comma-separated output.
- ORS (Output Record Separator): The string that awk prints at the end of each print statement. By default, it is a newline (\n).

These variables give the scriptwriter immense power to control both the parsing of input and the formatting of output, all from within the awk script itself.
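The variables above can be watched changing as awk moves through two small files. This is a sketch only: the file names one.txt and two.txt and their contents are arbitrary, and the files live in a temporary directory that is removed afterwards.

```shell
# Two throwaway input files in a temporary directory (names are arbitrary).
tmpdir=$(mktemp -d)
printf 'a b c\nd e\n' > "$tmpdir/one.txt"
printf 'f g h i\n'    > "$tmpdir/two.txt"

# OFS is set once in BEGIN; print inserts it between comma-separated items.
result=$(cd "$tmpdir" && awk '
BEGIN { OFS = "," }
{ print FILENAME, NR, FNR, NF }
' one.txt two.txt)

rm -rf "$tmpdir"
printf '%s\n' "$result"
```

In the output, NR keeps counting across both files while FNR restarts at 1 for two.txt.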

Patterns, Regular Expressions, and Relational Expressions

The “pattern” part of a pattern { action } pair is what gives awk its data-filtering capabilities. A pattern is an expression that evaluates to either true or false. If true, the action is executed. There are several types of patterns.

1. Regular Expressions: The most common type of pattern is a regular expression, enclosed in slashes (/). The action is executed if the current record ($0) contains a substring that matches the regular expression. For example, the pattern /ERROR/ will match any line containing the word “ERROR”. You can also match a regex against a specific field using the ~ (match) and !~ (does not match) operators. For instance, $3 ~ /critical/ checks if the third field contains the word “critical”.

2. Relational Expressions: You can use standard comparison operators (<, <=, ==, !=, >=, >) to form patterns based on the values of fields or variables. For example, the pattern NR > 100 would apply its action only to records after the 100th line. A more practical example in an embedded context might be $6 > 1024, which selects processes whose resident set size (the RSS column, reported in KiB) exceeds 1 MiB in the output of ps aux.

3. Range Patterns: A range pattern consists of two patterns separated by a comma, like pattern1, pattern2. It matches all records starting from the first record that matches pattern1 up to and including the record that matches pattern2. This is useful for extracting sections of a file, such as the content between a START_LOG and END_LOG marker.

4. Compound Patterns: Patterns can be combined using the logical operators && (AND), || (OR), and ! (NOT) to create more complex conditions. For example, $2 == "kernel" && /error/ would match only lines where the second field is exactly “kernel” and the line also contains the word “error”.
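The four pattern types can be compared side by side in one program. In this sketch the log lines are invented (field 1 is a source tag, field 2 a numeric value), and the awk variable names such as by_regex are illustrative. Each rule records the line numbers it matched.

```shell
# Hypothetical log: field 1 is a source tag, field 2 a numeric value.
log='kernel 10 boot
app 200 START_LOG
kernel 300 error in driver
app 50 END_LOG
kernel 20 idle'

result=$(printf '%s\n' "$log" | awk '
/error/                    { by_regex = by_regex " " NR }   # regex on $0
$1 ~ /^app$/               { by_field = by_field " " NR }   # regex on one field
$2 > 100                   { by_value = by_value " " NR }   # relational
/START_LOG/, /END_LOG/     { by_range = by_range " " NR }   # range
$1 == "kernel" && /error/  { by_both  = by_both  " " NR }   # compound
END {
    print "regex:" by_regex
    print "field match:" by_field
    print "relational:" by_value
    print "range:" by_range
    print "compound:" by_both
}')

printf '%s\n' "$result"
```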

Control Flow and Scripting Constructs

awk is not limited to simple pattern-action rules; it includes control flow statements that enable more complex algorithmic logic, just like a traditional programming language.

- if-else statements: These work as you would expect, allowing conditional execution of code within an action block.

{
    if ($3 > 100) {
        print "High value detected:", $0
    } else {
        print "Normal value:", $0
    }
}

- Loops (while, do-while, for): awk supports C-style loops for iterating within an action. The for loop is particularly useful for iterating over the fields of a record.

{
    for (i = 1; i <= NF; i++) {
        print "Field", i, "is", $i
    }
}

- Arrays: awk supports associative arrays, which are incredibly powerful. Array indices can be numbers or strings. This allows you to use data from the input to create dynamic data structures. For example, you could count the occurrences of different error types with error_counts[$3]++, where $3 contains the error message. This single line builds a frequency map, a task that would require significantly more code in a standard shell script.
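The frequency-map idea can be sketched end to end as follows. The log lines are invented for the example, with the error message assumed to be a single word in field 3. Because the order of a for (key in array) loop is unspecified in awk, the output is passed through sort to make it stable.

```shell
# Hypothetical log: field 3 holds a one-word error message.
log='t1 sshd timeout
t2 usb reset
t3 sshd timeout
t4 i2c nack
t5 sshd timeout'

# Count each message, then print "count message" pairs from the END block.
# sort orders by count (descending), then by message name.
result=$(printf '%s\n' "$log" | awk '
{ error_counts[$3]++ }
END { for (msg in error_counts) print error_counts[msg], msg }
' | sort -k1,1nr -k2,2)

printf '%s\n' "$result"
```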

By combining these elements—the record-field processing model, BEGIN/END blocks, built-in variables, powerful patterns, and familiar control structures—awk provides a complete and robust environment for text processing. On a resource-constrained embedded device like the Raspberry Pi 5, its efficiency and power make it an ideal choice for on-device log analysis, data filtering, and report generation.

Practical Examples

Theory provides the foundation, but true understanding comes from practice. In this section, we will apply our knowledge of awk to solve realistic problems an embedded systems developer might face when working with a Raspberry Pi 5. We will progress from simple log parsing to more complex report generation, demonstrating how to build and execute awk scripts.

Example 1: Parsing Kernel Messages with dmesg

The dmesg command prints the kernel’s ring buffer, which contains invaluable diagnostic information about drivers, hardware detection, and system errors. The output can be verbose, and awk is the perfect tool to distill it into a readable summary.

Our goal is to parse the dmesg output to extract messages related to the USB subsystem, format them with a clear timestamp, and highlight any lines containing the words “error” or “fail”.

Build and Configuration Steps:

No special hardware is needed for this example. We will work entirely from the command line of the Raspberry Pi 5.

1. Create the awk script file. Using a text editor like nano or vim, create a file named parse_usb_log.awk.

nano parse_usb_log.awk

2. Write the awk script. Enter the following code into the file. The comments explain each part of the script.

# parse_usb_log.awk
#
# This script parses the output of `dmesg` to find messages related to USB,
# formats the output, and flags potential issues.
#
# It assumes the default dmesg line layout: "[ 123.456789] source: message".

BEGIN {
    # Set the Output Field Separator to a tab for clean alignment.
    OFS = "\t";

    # Print a header for our report. This runs only once at the start.
    print "Timestamp", "Device/Driver", "Message";
    print "---------", "-------------", "-------";
}

# This is the main processing rule. It triggers on any line containing "usb".
# Lowercasing the record with tolower() makes the match case-insensitive in
# any awk, without relying on gawk's IGNORECASE variable.
tolower($0) ~ /usb/ {
    # The timestamp is the bracketed prefix, e.g., "[ 123.456789]". The padding
    # inside the brackets varies, so we locate the prefix with match() instead
    # of relying on field numbers.
    timestamp = "";
    rest = $0;
    if (match($0, /^\[ *[0-9]+\.[0-9]+\] */)) {
        timestamp = substr($0, 1, RLENGTH);
        # gsub(regex, replacement, target_string): strip brackets and spaces.
        gsub(/\[|\]| /, "", timestamp);
        rest = substr($0, RLENGTH + 1);
    }

    # The source of the message (e.g., "usbcore" or "usb 1-1") is the text
    # before the first ": ". The message is everything after it.
    source = "-";
    message = rest;
    colon = index(rest, ": ");
    if (colon > 0) {
        source = substr(rest, 1, colon - 1);
        message = substr(rest, colon + 2);
    }

    # Print the formatted output: timestamp, source, and the message.
    print timestamp, source, message;

    # Add an additional check for common error-related keywords.
    # awk regular expressions have no "i" flag, so we lowercase the record
    # before matching to catch "Error", "FAILED", and so on.
    if (tolower($0) ~ /error|fail/) {
        print "--> URGENT: Potential issue detected on this line!";
    }
}

END {
    # This block runs after all lines have been processed.
    print "\n--- USB Log Analysis Complete ---";

    # NR holds the total number of lines processed by awk.
    print "Scanned a total of", NR, "kernel messages.";
}

Execution and Expected Output:

1. Run the script. We will pipe the output of dmesg directly into our awk script using the -f flag to specify the script file. If your system restricts access to the kernel log, run sudo dmesg instead.

dmesg | awk -f parse_usb_log.awk

2. Analyze the output. The output will be a tab-separated table showing only the USB-related messages (the columns are aligned here for readability).

Timestamp   Device/Driver   Message
---------   -------------   -------
1.234567    usbcore         registered new interface driver usbfs
1.234589    usbcore         registered new interface driver hub
1.234678    usbcore         registered new device driver usb
2.567890    usb 1-1         new high-speed USB device number 2 using xhci_hcd
2.789012    usb 1-1         New USB device found, idVendor=2109, idProduct=3431, bcdDevice= 4.21
...
5.123456    usb 1-1.2       device descriptor read/64, error -71
--> URGENT: Potential issue detected on this line!

--- USB Log Analysis Complete ---
Scanned a total of 1542 kernel messages.

This example demonstrates the power of combining BEGIN for headers, a main pattern for filtering and formatting, if conditions for deeper analysis within an action, and END for a final summary.

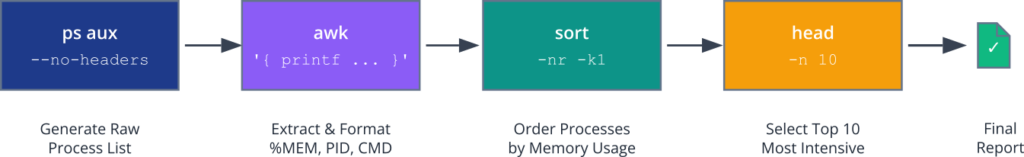

Example 2: Generating a System Resource Report

Embedded systems often run headless, and it’s crucial to monitor their resource usage (CPU, memory) remotely. We can create a script that uses ps, awk, and sort to generate a “top 10” report of the most memory-intensive processes.

Build and Configuration Steps:

1. Create the shell script. This time, we will embed the awk command within a larger shell script for better integration. Create a file named mem_report.sh.

nano mem_report.sh

2. Write the script. This script will use a command pipeline. ps generates the process data, awk extracts and formats it, sort orders it, and head selects the top entries.

#!/bin/bash
# mem_report.sh
#
# Generates a report of the top 10 processes by memory usage.

echo "--- Memory Usage Report for Raspberry Pi 5 ---"
echo "Generated on: $(date)"
echo ""

# Print the column headings with the same widths that awk uses below.
printf '%-8s %-10s %s\n' "%MEM" "PID" "COMMAND"

# Use ps to list all processes with user, pid, %mem, and command.
# aux = all users, user-oriented format, include processes without a tty.
# --no-headers removes the header line from ps output, so awk doesn't process it.
# A line ending in | continues on the next line, so no backslash is needed.
ps aux --no-headers |
awk '
    # This awk script processes each line from ps.
    {
        # ps aux output format is:
        # USER PID %CPU %MEM VSZ RSS TTY STAT START TIME COMMAND
        # $1   $2  $3   $4   ...                         $11...
        # We want to print the Memory % ($4), PID ($2), and the command ($11 to end).

        # Reconstruct the command string, as it may contain spaces.
        command = $11;
        for (i = 12; i <= NF; i++) {
            command = command " " $i;
        }

        # Use printf for more controlled, C-style formatting.
        # %-8s: left-aligned string, 8 chars wide
        # %-10s: left-aligned string, 10 chars wide
        # %s: string
        # \n: newline
        printf "%-8s %-10s %s\n", $4, $2, command;
    }
' |
# Pipe the formatted output from awk to the sort command.
# -k1,1: sort on the first column only (%MEM).
# n and r: compare numerically and in reverse (largest first).
sort -k1,1nr |
# Take the top 10 lines from the sorted output.
head -n 10

echo ""
echo "--- End of Report ---"

Execution and Expected Output:

1. Make the script executable.

chmod +x mem_report.sh

2. Run the script.

./mem_report.sh

3. Examine the output. The result is a clean, sorted report showing exactly what we need.

--- Memory Usage Report for Raspberry Pi 5 ---
Generated on: Tue Jul 8 22:15:01 UTC 2025

%MEM     PID        COMMAND
4.5      1234       /usr/lib/firefox/firefox -contentproc ...
2.1      890        /usr/sbin/Xorg -core :0 -seat seat0 ...
1.8      950        lxpanel --profile LXDE-pi
1.5      1100       pcmanfm --desktop --profile LXDE-pi
... (and 6 more lines)

--- End of Report ---

This example showcases how awk fits perfectly into the Unix philosophy of small tools doing one thing well, chained together in a pipeline to achieve a complex result. The awk script here acts as a powerful data transformation filter.

Example 3: Processing CSV Sensor Data

A common task in embedded systems is logging data from sensors. Let’s assume we have a sensor connected to the Raspberry Pi 5’s GPIO pins that logs temperature and humidity data to a CSV file every minute.

Hardware Integration (Conceptual):

Imagine a DHT22 sensor connected to the Raspberry Pi 5. A Python script runs in the background, reading from the sensor and appending data to /var/log/sensor_data.csv.

File Structure Example:

The file /var/log/sensor_data.csv would look like this:

# Timestamp (Unix Epoch),Temperature (C),Humidity (%)

1678886400,22.5,45.1

1678886460,22.6,45.0

1678886520,22.5,45.2

1678886580,24.0,44.9

1678886640,22.7,45.3

Our goal is to write an awk script that processes this file to calculate the average temperature and humidity, and also flag any temperature readings above a certain threshold (e.g., 23°C).

Build and Configuration Steps:

1. Create a sample data file. For testing, let’s create the log file.

mkdir -p /tmp/log
cat << EOF > /tmp/log/sensor_data.csv
# Timestamp (Unix Epoch),Temperature (C),Humidity (%)
1678886400,22.5,45.1
1678886460,22.6,45.0
1678886520,22.5,45.2
1678886580,24.0,44.9
1678886640,22.7,45.3
1678886700,21.9,46.0
EOF

2. Create the awk analysis script. Create a file named analyze_sensors.awk.

#!/usr/bin/awk -f
# analyze_sensors.awk
#
# Processes a CSV file of sensor data to calculate averages and flag anomalies.

BEGIN {
    # Set the Field Separator to a comma for CSV parsing.
    FS = ",";

    # Initialize variables for our calculations.
    temp_sum = 0;
    humidity_sum = 0;
    record_count = 0;
    HIGH_TEMP_THRESHOLD = 23.0;

    print "--- Sensor Data Analysis ---";
}

# This pattern skips any line that starts with a '#' (comment) or is empty.
# This is a robust way to handle header lines or blank lines in data files.
/^#/ || /^$/ {
    # The 'next' statement tells awk to immediately stop processing the
    # current record and move to the next one.
    next;
}

# This is the main action block, which runs for every valid data record.
{
    # Add the current values to our running totals.
    # The += operator treats each field as a number.
    temp_sum += $2;
    humidity_sum += $3;
    record_count++; # Increment the count of valid records.

    # Check if the temperature exceeds our defined threshold.
    if ($2 > HIGH_TEMP_THRESHOLD) {
        # The strftime function formats a Unix timestamp ($1) into a
        # human-readable string in the local timezone. It is not part of
        # POSIX awk; gawk provides it, as do recent versions of mawk.
        human_time = strftime("%Y-%m-%d %H:%M:%S", $1);
        printf "WARNING: High temperature of %.1f C detected at %s\n", $2, human_time;
    }
}

END {
    # After processing all records, calculate and print the averages.
    print "\n--- Summary Report ---";
    if (record_count > 0) {
        avg_temp = temp_sum / record_count;
        avg_humidity = humidity_sum / record_count;
        printf "Processed %d valid data records.\n", record_count;
        printf "Average Temperature: %.2f C\n", avg_temp;
        printf "Average Humidity: %.2f %%\n", avg_humidity;
    } else {
        print "No valid data records found.";
    }
    print "--- Analysis Complete ---";
}

Execution and Expected Output:

1. Run the script against our sample data file.

awk -f analyze_sensors.awk /tmp/log/sensor_data.csv

2. The output will provide both the real-time warning and the final summary. The time shown in the warning assumes the system timezone is UTC.

--- Sensor Data Analysis ---
WARNING: High temperature of 24.0 C detected at 2023-03-15 13:23:00

--- Summary Report ---
Processed 6 valid data records.
Average Temperature: 22.70 C
Average Humidity: 45.25 %
--- Analysis Complete ---

This final example demonstrates a complete data analysis workflow: setting a custom field separator, skipping headers, performing calculations on each record, using conditional logic to find anomalies, and using the END block to present a comprehensive summary. This is a pattern that can be adapted to countless embedded data logging scenarios.

Common Mistakes & Troubleshooting

awk is powerful, but its syntax can be subtle. Newcomers often encounter a few common pitfalls. Understanding these ahead of time can save hours of debugging.
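One pitfall of this kind involves quoting. The sketch below is an illustration chosen for this section rather than an exhaustive list, and its sample data and names (threshold, limit, wrong, right) are hypothetical. Inside single quotes the shell does not expand $threshold, so awk interprets it as a field reference through its own unset variable, which evaluates to $0. Passing the value with -v avoids the problem.

```shell
threshold=23

# Pitfall: the shell leaves $threshold untouched inside single quotes. awk then
# reads it as "$" applied to its own unset variable threshold, i.e. $0, so the
# comparison is made against the whole record and not against 23.
wrong=$(printf '%s\n' 'a 22.5' 'b 24.0' | awk '$2 > $threshold { print $1 }')

# Fix: hand the shell value to awk as an awk variable with -v.
right=$(printf '%s\n' 'a 22.5' 'b 24.0' | awk -v limit="$threshold" '$2 > limit { print $1 }')

printf 'with -v: %s\n' "$right"
```

The -v form also keeps the awk program in single quotes, so field references such as $2 are never touched by the shell.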

Exercises

These exercises are designed to reinforce the concepts covered in this chapter. They range from simple filtering to building a more complex analysis script.

- Network Connection Report: The netstat -tuln command on your Raspberry Pi 5 lists all listening TCP and UDP sockets. The output looks something like this:

Proto Recv-Q Send-Q Local Address           Foreign Address         State
tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN
udp        0      0 0.0.0.0:68              0.0.0.0:*

  Objective: Write a single awk command (not a script file) that processes the output of netstat -tuln. The command should:
  - Skip the header line.
  - Print only the protocol (e.g., tcp), the local address and port (0.0.0.0:22), and the state (LISTEN), if available.
  - Label the output clearly. For example: Protocol: tcp, Address: 0.0.0.0:22, State: LISTEN.

- Filesystem Usage Alerter: The df -h command shows filesystem usage.

  Objective: Write a shell script named check_disk.sh that uses df -h and awk to check the root filesystem (/). The script should:
  - Identify the line corresponding to the root filesystem.
  - Extract the percentage usage value (e.g., 85%).
  - If the usage is 80% or higher, it should print a WARNING: Root filesystem usage is critically high! message.
  - If the usage is below 80%, it should print an INFO: Root filesystem usage is normal. message.
  - Hint: You will need to remove the % from the usage field to perform a numeric comparison. The sub() function is perfect for this.

- User Login Summary: The /var/log/auth.log file (or a similar file depending on the system configuration) records user logins, sudo attempts, and other authentication events. A successful login line might look like:

Jul 8 21:50:01 raspberrypi sshd[12345]: Accepted password for pi from 192.168.1.100 port 54321 ssh2

  Objective: Write an awk script file named login_summary.awk that processes auth.log and generates a count of successful logins for each user.
  - The script should only process lines containing “Accepted password for”.
  - It should use an associative array to store a count for each username. The username is the 9th field in the example line above.
  - The END block should iterate through the array and print a summary, like:

Login Summary:
User 'pi': 15 successful logins.
User 'admin': 3 successful logins.

- Advanced Sensor Data Analysis: Building on the sensor data example, enhance the analyze_sensors.awk script.

  Objective: Modify the script to also find the minimum and maximum temperature and humidity recorded in the log file.
  - In the BEGIN block, initialize variables for min_temp, max_temp, min_humidity, and max_humidity. You might need to initialize min variables to a very large number and max variables to a very small number (or to the values from the first data record).
  - In the main processing block, update these variables if the current record’s value is lower than the current min or higher than the current max.
  - In the END block, print these new summary statistics along with the averages.

Summary

- awk is a data-driven programming language that processes text files record by record (usually line by line).
- The core operational model is based on pattern { action } pairs. If a record matches the pattern, the action is executed.
- BEGIN and END are special patterns that allow for executing code before any records are read and after all records have been processed, respectively.
- awk automatically splits records into fields (default: by whitespace), accessible via $1, $2, etc. $0 represents the entire record.
- The Field Separator (FS) and Record Separator (RS) can be changed to parse different data formats, like CSV.
- Built-in variables like NR (Number of Records), NF (Number of Fields), and FILENAME provide crucial context within a script.
- awk supports regular expressions, relational expressions, and compound patterns for sophisticated data filtering.
- It is a complete language with control structures (if-else, for, while) and powerful associative arrays for complex data manipulation and aggregation.
- awk integrates seamlessly into shell pipelines, acting as a powerful filter and transformation engine between other standard Linux commands.

Further Reading

- GAWK: Effective AWK Programming – The official manual for GNU awk. This is the definitive reference for all features, functions, and syntax.
- The AWK Programming Language by Alfred V. Aho, Brian W. Kernighan, and Peter J. Weinberger – The original book by the creators of awk. While old, its explanation of the language’s design and philosophy is unparalleled.
- Sed & Awk, 2nd Edition by Dale Dougherty and Arnold Robbins (O’Reilly) – A classic, practical guide that covers both sed and awk with numerous real-world examples.
- Grymoire’s awk Tutorial – A well-regarded online tutorial that provides clear, simple examples for learning the fundamentals of awk.
- Linux Command Line and Shell Scripting Bible by Richard Blum & Christine Bresnahan – This book contains excellent chapters on text processing tools, including a very practical introduction to awk in the context of shell scripting.
- Raspberry Pi Documentation – While not awk-specific, the official documentation provides context for the system logs and command outputs you will be parsing on your device.